I once quoted Abraham Maslow in my note “Using predictive modeling as a hammer when the nail needs more thinking“, a problem that has since eased as focus shifts to causal modeling and optimization.

Now, if the problem is extracting handwritten digits from a PDF or an image, what’s the solution? I guess good old reliable OCR? There is nothing generative about extraction, so using an LLM may be moot, if not actively detrimental (due to hallucinations). OCR is widely available through cloud like AWS Textract and Google Cloud Vision, some of which also offer human validation.

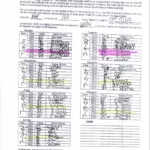

Instead, the Reuters team intriguingly chose the shiniest hammer, an LLM, to extract data from handwritten prison temperature logs. They uploaded 20,326 pages of logs to Gemini 2.5 Pro, followed by manual cleaning and merging. One big problem with this approach is the inevitable made-up text (hallucinations), which required the team to hand-code 384 logs.

So why not OCR but an LLM? All I can think of is, LLMs may be useful in extraction when the text is highly unstructured and contextual understanding is needed, neither of which seems to apply here. Surprisingly, the methodological note doesn’t even mention OCR as a solution.

Putting the tool choice aside, though, Reuters asks an important question here: “How hot does it get inside prisons?” and the answer is “Very.” I applaud the effort and data-centric journalism, and I recommend reading the story.

Credit for the image goes to Adolfo Arranz.