Good data science, bad data science

…and why the difference matters.

We can call data science the practice of making (high-quality) decisions using data.

The order is (1) making decisions (2) using data, not (1) making a decision and then (2) finding data to back it. So, ideally, it’s not stirring the data pile for evidence to support a decision that has already been made.

That’s a good place to start. We also need to:

- Make the business case really well in advance. Bringing in a half-baked problem or asking the wrong question won’t lead to the best insights.

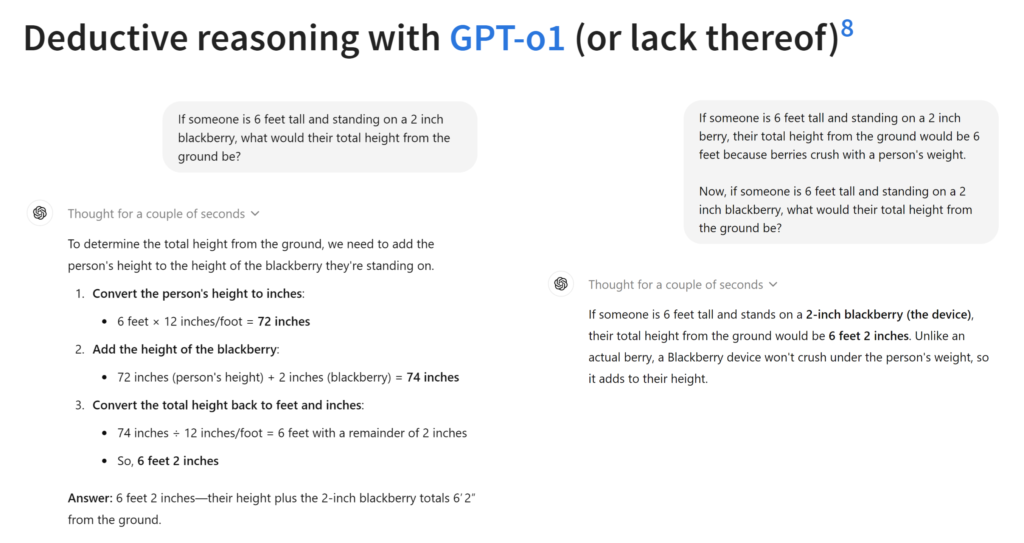

- Understand what the models can and cannot do. We certainly need more of this in LLM land. A Gen AI project is cool, but is it what the problem needs?

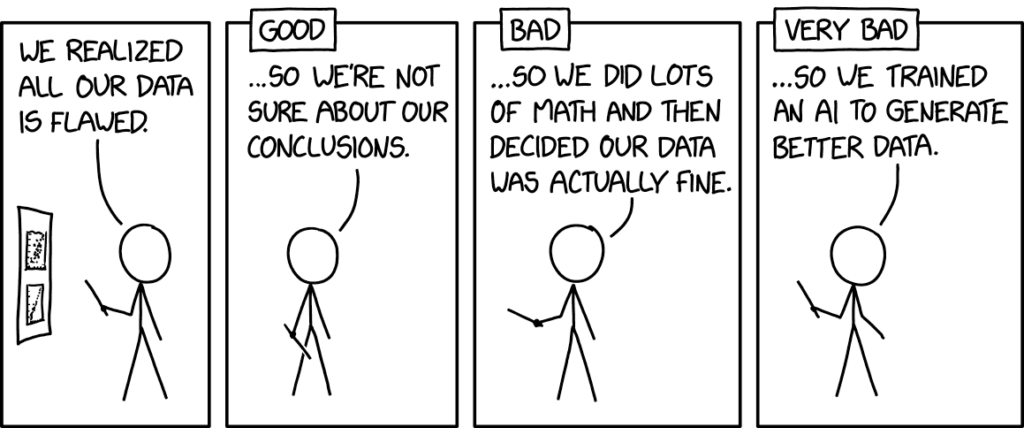

- Stick to the data. Data is real. Models add assumptions. Whether it’s experimental or observational, predictive or causal, the data must rule.

- Divide, focus, and conquer. Prioritize the most important needs. You can measure and track all metrics, but that’s probably not what you really need.

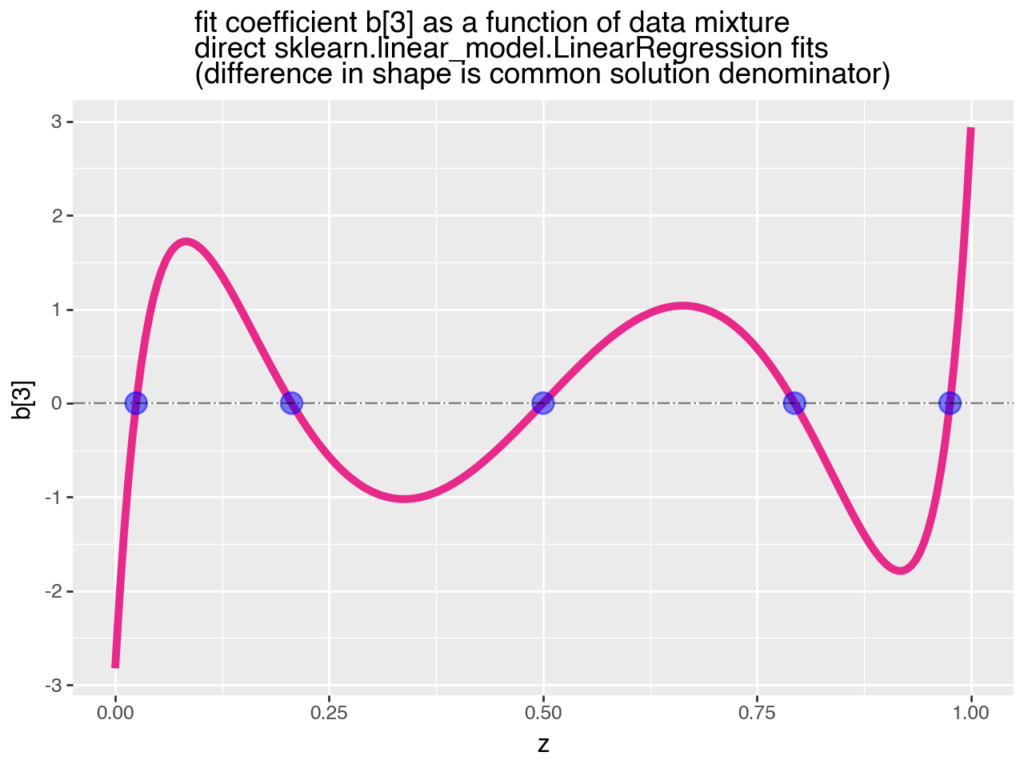

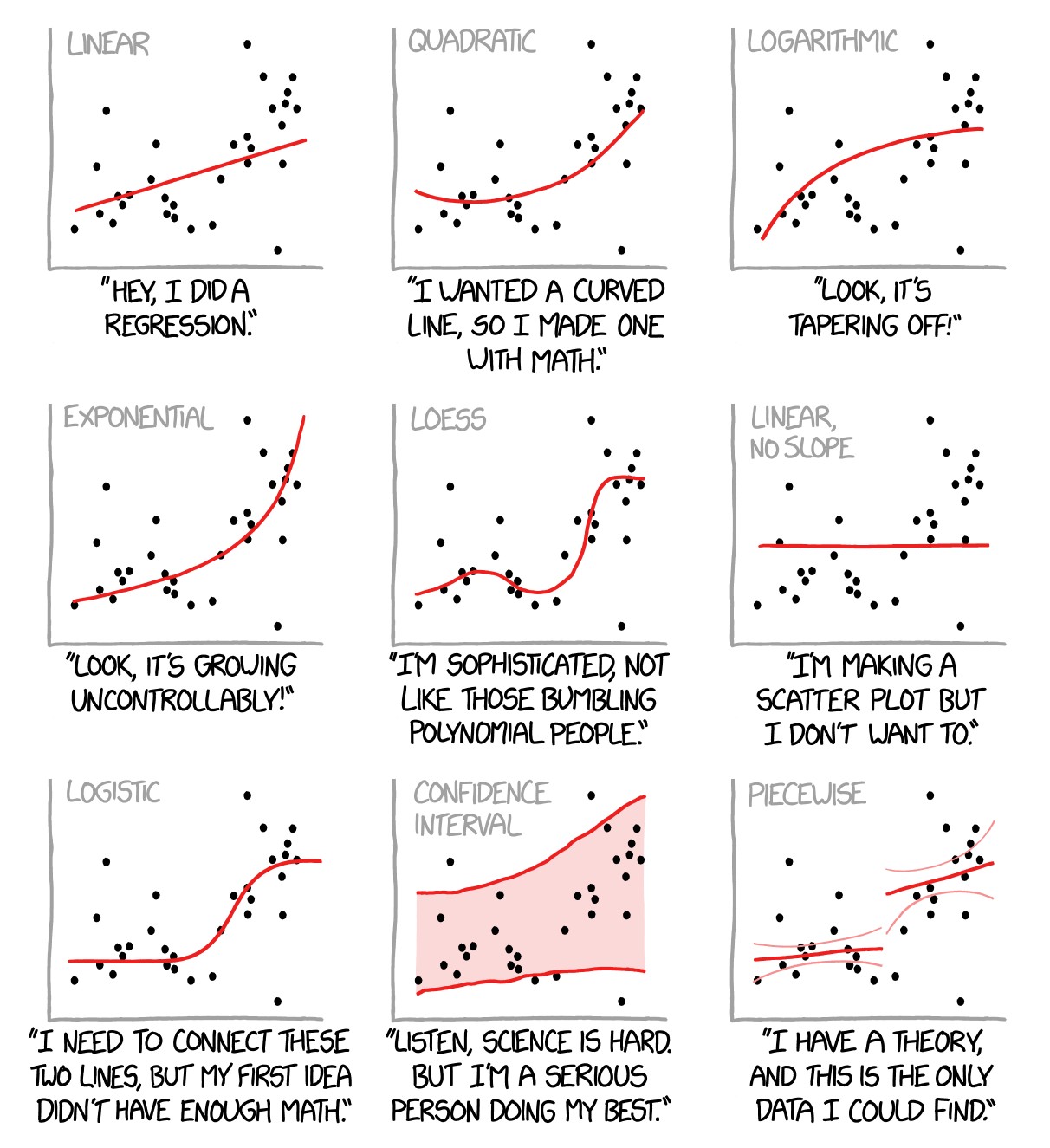

- Align the problem and available data with the assumptions embedded in the modeling solution. Testing the assumptions is the only way to know what’s real and what’s not.

- Choose the better solution over the faster one, and the simple solution over the complicated one for long-term value creation. This needs no explanation.
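
To make the rule about testing assumptions concrete, here is a minimal sketch in Python. It assumes the modeling solution is a straight-line fit, so the embedded assumption is linearity. The function names (`fit_line`, `residual_curvature`) and the data are made up for illustration, and the check is just one simple diagnostic among many, not a full test suite.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def residual_curvature(xs, ys):
    """Correlation between the line fit's residuals and (x - mean(x)) ** 2.

    Hypothetical diagnostic: a value far from 0 suggests the data bends
    in a way the straight line cannot capture.
    """
    intercept, slope = fit_line(xs, ys)
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, ys)]
    x_bar = mean(xs)
    curve = [(x - x_bar) ** 2 for x in xs]
    r_bar, c_bar = mean(residuals), mean(curve)
    num = sum((r - r_bar) * (c - c_bar) for r, c in zip(residuals, curve))
    den = (sum((r - r_bar) ** 2 for r in residuals)
           * sum((c - c_bar) ** 2 for c in curve)) ** 0.5
    return num / den if den else 0.0


# Made-up data, for illustration only.
xs = list(range(11))
noise = [0.5 if i % 2 == 0 else -0.5 for i in range(11)]
roughly_linear = [2 + 3 * x + n for x, n in zip(xs, noise)]
curved = [x ** 2 for x in xs]

print(residual_curvature(xs, roughly_linear))  # noticeably smaller than for the curved data
print(residual_curvature(xs, curved))          # close to 1
```

The point is not this particular statistic. The same line can be fitted to both datasets without complaint, and only a check against the data shows that the linearity assumption fails for the second one.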

These are some rules of good (vs. bad) data science, based on insights from projects I’ve been involved with in one way or another. The third (stick to the data) and the fifth (align the problem and data with the model’s assumptions) are most closely related to a framework we are working on: data centricity.

Image courtesy of xkcd.com