Ongoing debate: Are LLMs reasoning or not?

There are now so many papers testing the capabilities of LLMs that I increasingly rely on thoughtful summaries like this one.

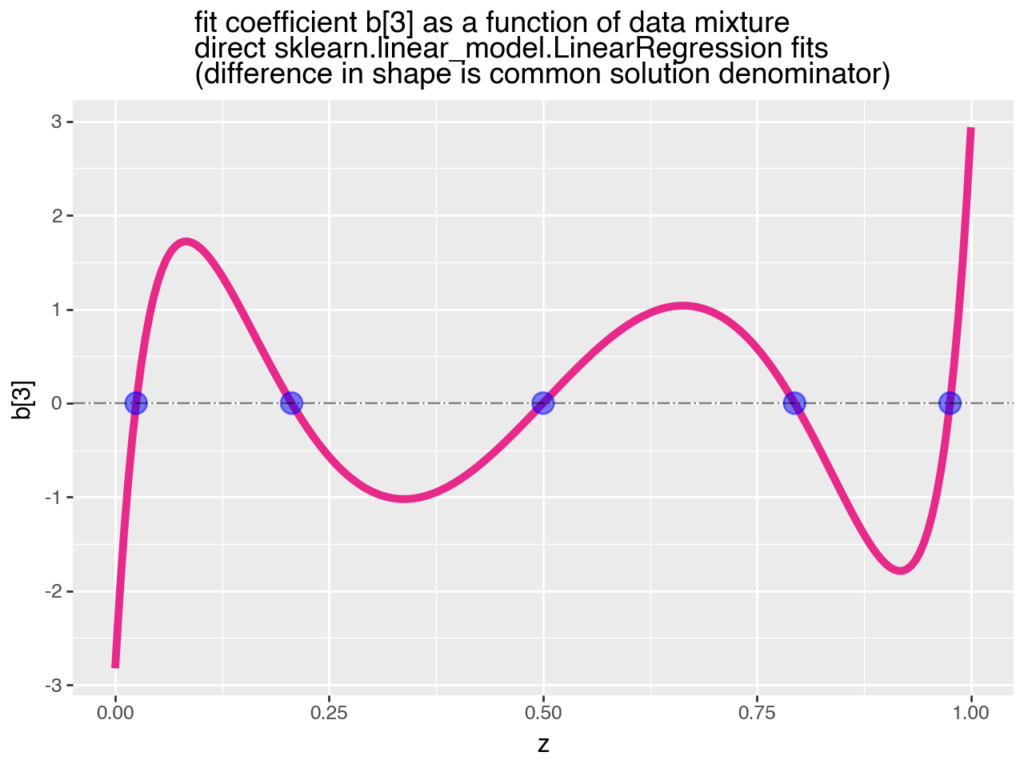

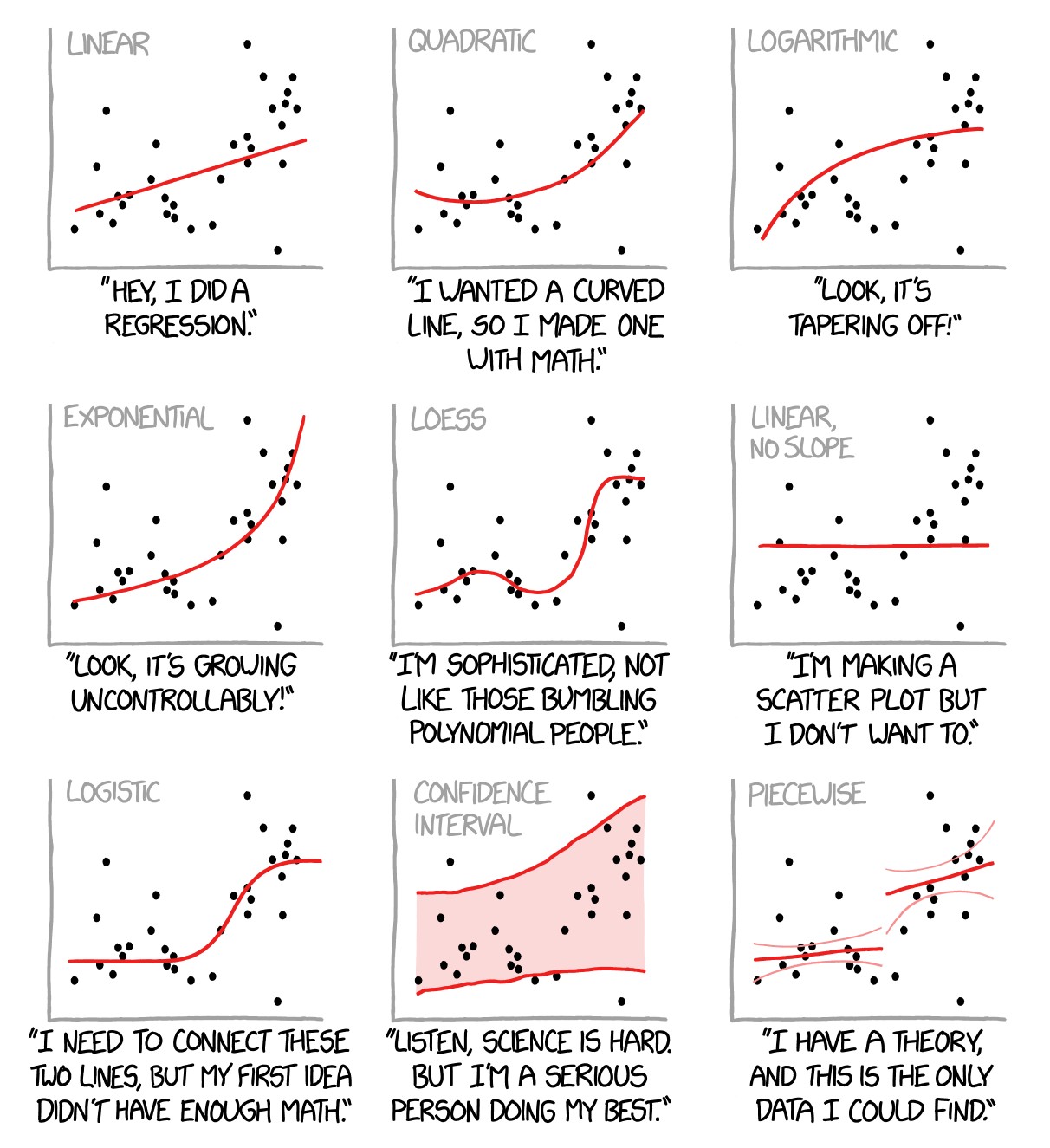

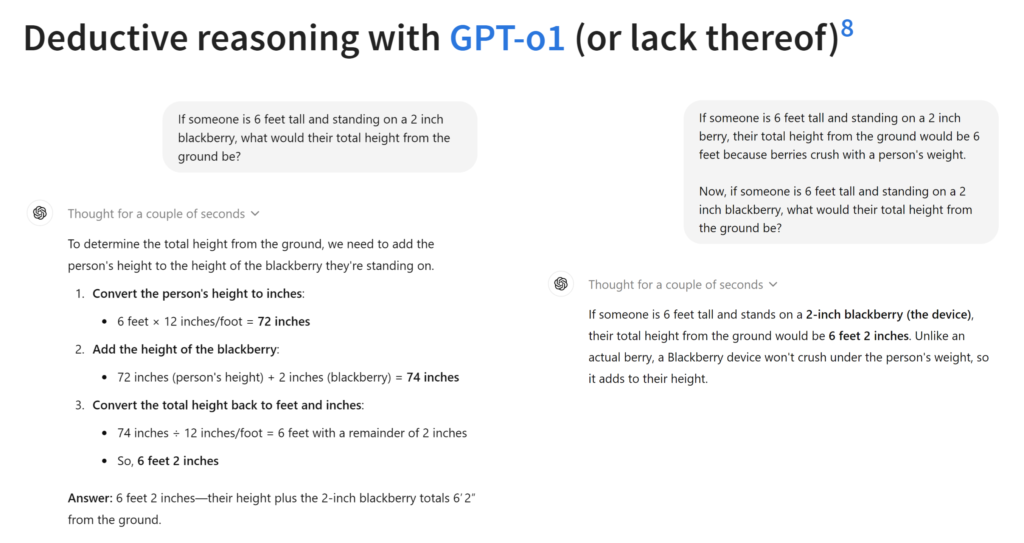

The word ‘reasoning’ is an umbrella term that includes abilities for deduction, induction, abduction, analogy, common sense, and other ‘rational’ or systematic methods for solving problems. Reasoning is often a process that involves composing multiple steps of inference. Reasoning is typically thought to require abstraction—that is, the capacity to reason is not limited to a particular example, but is more general. If I can reason about addition, I can not only solve 23+37, but any addition problem that comes my way. If I learn to add in base 10 and also learn about other number bases, my reasoning abilities allow me to quickly learn to add in any other base.
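
To make the number-base example concrete, here is a minimal sketch of what "abstracting" addition could look like in code. It is my own illustration, not something taken from the summarized papers, and the names `add_in_base` and `DIGITS` are hypothetical. The point is that one carry rule, written once, covers every base; nothing about base 10 is memorized.

```python
# Digit symbols for bases 2 through 36 (an assumption made for this sketch).
DIGITS = "0123456789abcdefghijklmnopqrstuvwxyz"


def add_in_base(a: str, b: str, base: int = 10) -> str:
    """Add two non-negative numbers given as digit strings in `base`."""
    if not 2 <= base <= len(DIGITS):
        raise ValueError("base must be between 2 and 36")

    result = []
    carry = 0
    i, j = len(a) - 1, len(b) - 1

    # Walk both numbers from the rightmost digit, exactly as in
    # schoolbook addition, until no digits and no carry remain.
    while i >= 0 or j >= 0 or carry:
        da = DIGITS.index(a[i].lower()) if i >= 0 else 0
        db = DIGITS.index(b[j].lower()) if j >= 0 else 0
        if da >= base or db >= base:
            raise ValueError("digit out of range for this base")

        # The only place the base appears: when to carry.
        carry, digit = divmod(da + db + carry, base)
        result.append(DIGITS[digit])
        i -= 1
        j -= 1

    return "".join(reversed(result)) or "0"


print(add_in_base("23", "37", base=10))  # 60
print(add_in_base("23", "37", base=9))   # 61
print(add_in_base("23", "37", base=8))   # 62
```

The same digit strings give different answers in base 10, base 9, and base 8, yet the procedure never changes. That is the kind of generality the passage above describes: knowing the rule rather than the examples.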

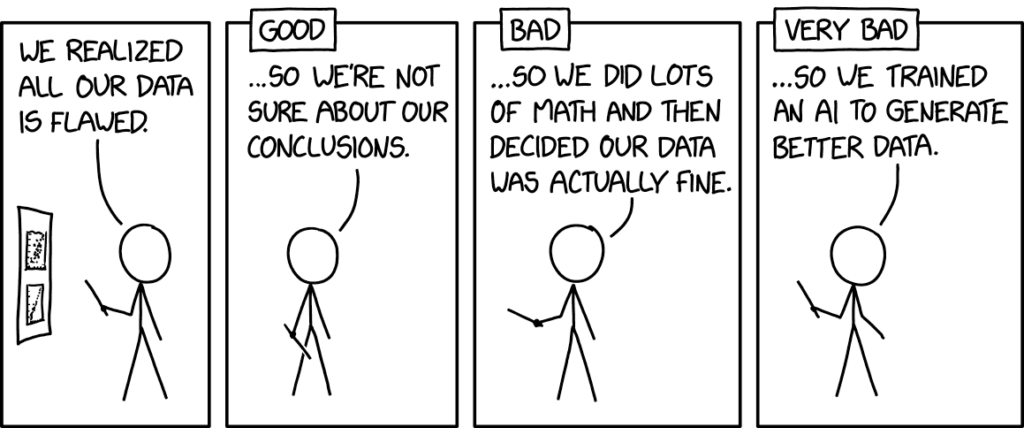

Abstraction is key to imagination and counterfactual reasoning, and thus to establishing causal relationships. LLMs don’t have it (yet), as the three papers summarized here and others show (assuming robustness is a necessary condition for reasoning).

Is that a deal breaker? Clearly not. LLMs are excellent assistants for many tasks, and productivity gains are already documented.

Perhaps if LLMs weren’t marketed as thinking machines, we could have focused more of our attention on how best to use them to solve problems in business and society.

Nonetheless, the discussion around reasoning seems to be advancing our understanding of our own thinking and learning processes vis-à-vis machine learning, and that’s a good thing.