When to normalize / apply weights

To me, this is interesting not because of the lack of transparency in methodology but the potential reason for the rankings to be wrong.

I want to believe that this is a mistake not fraud, but really? Applying the weights before normalizing the scores? And the Bloomberg Businessweek spokesperson says “the magazine’s methodology was vetted by multiple data scientists.”

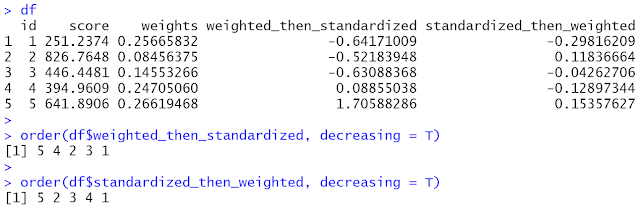

I have created a quick scenario as a reminder to my former (and current) students (posted in the comments as LinkedIn doesn’t allow here). In the example, the scores are standardized across the five items (which are randomly generated and assigned weights). In the Businessweek rankings, standardization is supposed to be across institutions so that the weights proportionately affect each institution’s score on the corresponding item. Nevertheless, the source of the error is the same. If the weights are applied before normalizing the data, the scores are adjusted by the weights disproportionately. Ranking changes accordingly.