New chapters in the Causal Book: RD and DoubleML

In online travel agency (OTA) loyalty programs, customers typically earn “Platinum” status once their spending exceeds a threshold (e.g., Expedia’s One Key or Booking.com’s Genius). Platinum comes with perks meant to encourage further spending.

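Regression discontinuity (RD) is a natural framework for estimating the causal effect of that status: do customers actually spend more because they are Platinum, and if so, by how much? Customers just above and just below the threshold are otherwise comparable, so a jump in spend at the threshold can be attributed to the status itself.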

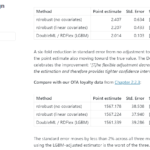

In the newly published chapters of the Causal Book, we simulate data using the Synthetic Data Vault and estimate the incremental spend driven by Platinum status. A naïve comparison suggests a $2,100 increase in spend, whereas the RD estimate from rdrobust is $1,567 at the threshold, close to the ground truth of $1,500.
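To see why the naïve comparison overshoots, here is a minimal sketch in plain Python. It is not the book's code: it does not use the Synthetic Data Vault or rdrobust, and the threshold, slope, noise level, and bandwidth are made-up values chosen for illustration. Only the $1,500 ground-truth jump is taken from the text. It assumes the outcome rises smoothly with qualifying spend, then compares a simple difference in means with a hand-rolled local linear fit (triangular kernel) on each side of the threshold.

```python
import random

random.seed(0)

CUTOFF = 5_000.0        # hypothetical Platinum threshold (not from the book)
TRUE_EFFECT = 1_500.0   # ground-truth jump, matching the figure quoted above


def simulate(n):
    """Return (distance from cutoff, subsequent spend) pairs for n customers."""
    data = []
    for _ in range(n):
        qualifying_spend = random.uniform(0, 10_000)   # running variable
        platinum = qualifying_spend >= CUTOFF          # sharp assignment rule
        spend = 2_000 + 0.3 * qualifying_spend + random.gauss(0, 500)
        if platinum:
            spend += TRUE_EFFECT
        data.append((qualifying_spend - CUTOFF, spend))
    return data


def boundary_fit(points, h):
    """Triangular-kernel weighted linear fit; returns the fitted value at the cutoff."""
    w = [1 - abs(d) / h for d, _ in points]
    sw = sum(w)
    mean_d = sum(wi * d for wi, (d, _) in zip(w, points)) / sw
    mean_y = sum(wi * y for wi, (_, y) in zip(w, points)) / sw
    sxx = sum(wi * (d - mean_d) ** 2 for wi, (d, _) in zip(w, points))
    sxy = sum(wi * (d - mean_d) * (y - mean_y) for wi, (d, y) in zip(w, points))
    slope = sxy / sxx
    return mean_y - slope * mean_d   # intercept, i.e. prediction at distance 0


data = simulate(4_000)

# Naive estimate: all Platinum customers versus all non-Platinum customers.
above = [y for d, y in data if d >= 0]
below = [y for d, y in data if d < 0]
naive = sum(above) / len(above) - sum(below) / len(below)

# RD estimate: difference of the two local fits evaluated at the threshold.
h = 1_000.0   # hand-picked bandwidth, not a data-driven choice
right = [(d, y) for d, y in data if 0 <= d < h]
left = [(d, y) for d, y in data if -h < d < 0]
rd_estimate = boundary_fit(right, h) - boundary_fit(left, h)

print(f"naive difference in means: {naive:,.0f}")
print(f"local linear RD estimate:  {rd_estimate:,.0f}")
```

The naïve number absorbs the fact that high spenders would have spent more anyway, while the local fit compares only customers near the threshold. The specific figures printed here will not match the book's $2,100 and $1,567, since the simulated data differ.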

We also explore bandwidth selection, highlighting the bias-variance tradeoff when choosing between MSE-optimal (mean squared error) and CER-optimal (coverage error rate) bandwidths.
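The tradeoff can be illustrated with a small Monte Carlo sketch. This is an assumption-laden toy, not the chapter's analysis: it does not compute MSE-optimal or CER-optimal bandwidths (rdrobust does that), and the curvature, noise, sample size, and the two bandwidths are hypothetical. The outcome curve is made to bend only above the threshold, so a wide window lets a straight-line fit pick up bias, while a narrow window has little bias but uses fewer customers and is noisier.

```python
import random
import statistics

random.seed(1)

TRUE_EFFECT = 1_500.0   # same ground truth as quoted in the text
CURVATURE = 2e-4        # hypothetical: the outcome curve bends only above the cutoff


def simulate(n):
    """Return (distance from cutoff, spend) pairs with curvature on the treated side."""
    data = []
    for _ in range(n):
        dist = random.uniform(-5_000, 5_000)   # running variable minus cutoff
        y = 3_500 + 0.3 * dist + random.gauss(0, 500)
        if dist >= 0:
            y += TRUE_EFFECT + CURVATURE * dist ** 2
        data.append((dist, y))
    return data


def boundary_fit(points, h):
    """Triangular-kernel weighted linear fit; returns the fitted value at the cutoff."""
    w = [1 - abs(d) / h for d, _ in points]
    sw = sum(w)
    mean_d = sum(wi * d for wi, (d, _) in zip(w, points)) / sw
    mean_y = sum(wi * y for wi, (_, y) in zip(w, points)) / sw
    sxx = sum(wi * (d - mean_d) ** 2 for wi, (d, _) in zip(w, points))
    sxy = sum(wi * (d - mean_d) * (y - mean_y) for wi, (d, y) in zip(w, points))
    return mean_y - (sxy / sxx) * mean_d


def rd_estimate(data, h):
    """Local linear RD estimate: difference of the two boundary fits."""
    right = [(d, y) for d, y in data if 0 <= d < h]
    left = [(d, y) for d, y in data if -h < d < 0]
    return boundary_fit(right, h) - boundary_fit(left, h)


def bias_and_sd(h, reps=200, n=2_000):
    """Average error and spread of the estimate over repeated simulated samples."""
    estimates = [rd_estimate(simulate(n), h) for _ in range(reps)]
    return statistics.mean(estimates) - TRUE_EFFECT, statistics.stdev(estimates)


# Two hand-picked bandwidths: one narrow, one wide.
results = {h: bias_and_sd(h) for h in (500, 4_000)}
for h, (bias, sd) in results.items():
    print(f"h={h}: bias={bias:.0f}, sd={sd:.0f}")
```

A CER-optimal bandwidth is narrower than the MSE-optimal one, so it sits further toward the low-bias, high-variance end of this tradeoff.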

Next, we turn to DoubleML. In a clean, sharp RD design like this one, rdrobust already fits a local polynomial around the threshold, so the question is whether flexible covariate adjustment adds any precision. It does not: DoubleML performs worse (see “Oh my! DoubleML is worse for the RD design”). We then run a diagnostic to understand why.